Just-In-Time Access: A Zero-Trust Approach to Credentials at Scale

In modern enterprise architecture, the traditional approach of provisioning static service accounts for application-to-database communication is a massive security liability. As organizations move toward Zero Trust, the goal is no longer just securing passwords — it is eliminating them entirely through ephemeral, Just-In-Time (JIT) access.

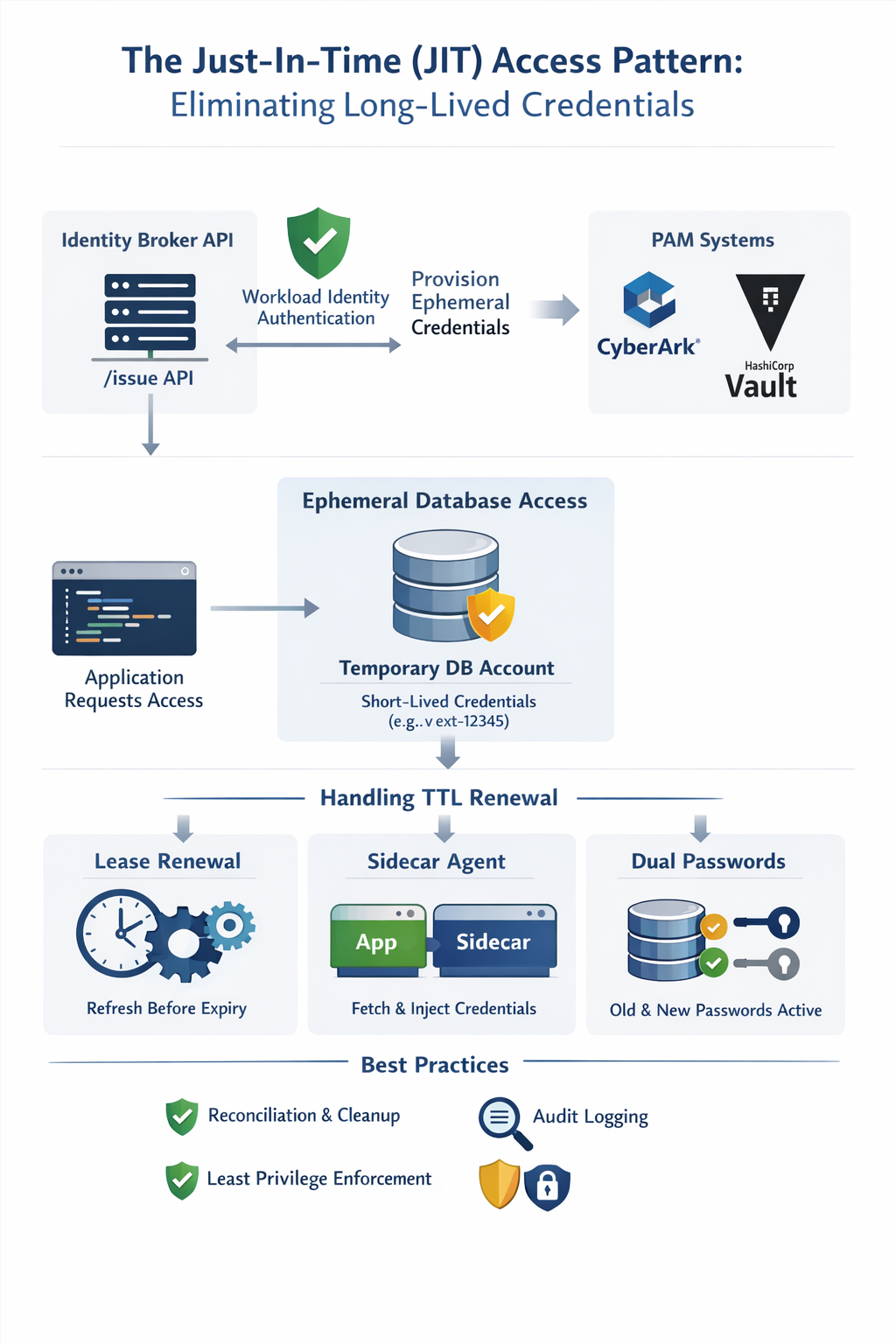

This guide explores how to architect a JIT credential broker (an /issue API), how Privileged Access Management (PAM) tools like CyberArk and HashiCorp Vault fit into the ecosystem, and how consuming applications should handle short-lived credentials without dropping active connection pools.

Problem Statement

For decades, the standard pattern for granting an application access to a database or external system involved creating a dedicated service account or static credentials as an application secret (e.g., svc_app_prod) in LDAP or directly in the database. While simple to implement, this approach has become an enterprise-grade security liability.

This pattern introduces three critical enterprise risks:

- Standing Privileges & Blast Radius: The account exists 24/7, even when the application is idle. If an attacker compromises the credentials (via a leaked .env file or source code repository), they gain indefinite, undetected access to the database.

- The Rotation Nightmare: Rotating a shared database password often requires coordinated application restarts. If the password is changed in the database but not updated in the application's configuration simultaneously, the application crashes.

- Orphaned Accounts: When applications are decommissioned, their service accounts are frequently left behind, creating "ghost accounts" that auditors flag during compliance checks (SOC 2, PCI-DSS).

The core risk is simple: static credentials that never expire are a ticking clock. Every day they exist unused is another day an attacker could be silently using them.

Solution: The Identity Broker API (/issue)

The solution is to introduce a dedicated Identity Broker API: a lightweight /issue endpoint that acts as the single gatekeeper between all workloads and every target system (databases, message queues, cloud storage). Applications never hold a password; they hold only a short-lived lease that expires automatically.

This broker sits on top of enterprise PAM tools like CyberArk Conjur or HashiCorp Vault, which perform the actual dynamic secret generation. The broker adds the policy layer: it enforces which workload identity can request which role on which target, logs every issuance to an immutable audit trail, and ensures cleanup happens even when the PAM engine experiences transient failures.

Core principle: A credential should exist for exactly as long as the work that requires it, and no longer. The /issue API is the mechanism that enforces this.

Why Build a Custom Broker Abstraction Layer?

Enterprise PAM tools like CyberArk Conjur and HashiCorp Vault are powerful — but integrating them directly into every application creates a hidden dependency that is as risky as the static credentials you are trying to eliminate.

Building a thin, organisation-owned broker library that wraps the PAM product behind your /issue API is the architectural pattern that makes JIT access both sustainable and enterprise-ready. Here is why this abstraction layer is critical:

No Vendor Lock-In

When an application calls issueApiClient.fetchCredential("inventory_db"), it does not know whether HashiCorp Vault, CyberArk, or your own LDAP script is running behind the API. The contract is the /issue endpoint, not the PAM vendor.

If your organisation decides to migrate from HashiCorp Vault to CyberArk Conjur (or vice versa), the change is entirely internal to the broker. Every application across every team continues operating without a single line of application code changing.
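The vendor-neutral contract described above can be sketched as a single interface that the broker depends on. This is a minimal illustration, not a vendor integration: `SecretsBackend`, `IssuedCredential`, `StubBackend`, and `CredentialBroker` are hypothetical names, and the stub returns canned values where a real backend would call Vault or Conjur.

```java
import java.time.Duration;
import java.time.Instant;

// Hypothetical value type returned by the broker; not a vendor class.
final class IssuedCredential {
    final String username;
    final String password;
    final Instant expiresAt;

    IssuedCredential(String username, String password, Instant expiresAt) {
        this.username = username;
        this.password = password;
        this.expiresAt = expiresAt;
    }
}

// The only PAM-facing seam: each vendor gets one implementation of this interface.
interface SecretsBackend {
    IssuedCredential createDynamicCredential(String target, Duration ttl);
}

// Stand-in backend for illustration; a real one would call the vendor API here.
final class StubBackend implements SecretsBackend {
    private final String vendorName;

    StubBackend(String vendorName) {
        this.vendorName = vendorName;
    }

    @Override
    public IssuedCredential createDynamicCredential(String target, Duration ttl) {
        String user = "v-" + vendorName + "-" + target;
        return new IssuedCredential(user, "generated-by-" + vendorName, Instant.now().plus(ttl));
    }
}

// The broker depends on the interface only, so swapping vendors is a wiring change.
final class CredentialBroker {
    private final SecretsBackend backend;

    CredentialBroker(SecretsBackend backend) {
        this.backend = backend;
    }

    IssuedCredential issue(String target) {
        return backend.createDynamicCredential(target, Duration.ofMinutes(60));
    }
}
```

Constructing `new CredentialBroker(new StubBackend("vault"))` versus `new CredentialBroker(new StubBackend("conjur"))` changes nothing for callers of `issue`, which is the property the abstraction layer is meant to protect.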

Organisation-Specific Rules & Policy Enforcement

Off-the-shelf PAM products enforce their own generic security policies. Your broker layer lets you enforce organisation-specific rules on top of them, such as:

- Workload allow-lists: Only pre-approved workload identities (Kubernetes service accounts, cloud IAM roles) may request credentials for a given target.

- Time-window policies: Certain sensitive databases may only be accessed during business hours; all other requests are rejected at the broker — before they reach the PAM engine.

- Role scoping by environment: A workload tagged env=staging is blocked from ever requesting a production database role, regardless of what the PAM product would permit on its own.

- Unified rate limiting: Throttle abusive or runaway credential requests against the broker by enforcing per-workload request quotas across all PAM backends simultaneously.
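The four rule types listed above can be sketched as one policy check that runs before any request reaches the PAM engine. This is a simplified illustration under stated assumptions: `IssueRequest` and `BrokerPolicy` are hypothetical names, environment scoping is reduced to an exact match between workload and target environment, and the quota is a lifetime counter rather than a windowed rate limit.

```java
import java.time.LocalTime;
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;
import java.util.Set;

// Hypothetical request shape; the field names are illustrative only.
final class IssueRequest {
    final String workload;
    final String workloadEnv;
    final String target;
    final String targetEnv;

    IssueRequest(String workload, String workloadEnv, String target, String targetEnv) {
        this.workload = workload;
        this.workloadEnv = workloadEnv;
        this.target = target;
        this.targetEnv = targetEnv;
    }
}

final class BrokerPolicy {
    private final Map<String, Set<String>> allowedWorkloadsByTarget;
    private final LocalTime windowStart;
    private final LocalTime windowEnd;
    private final int maxRequestsPerWorkload;
    private final Map<String, Integer> requestCounts = new HashMap<>();

    BrokerPolicy(Map<String, Set<String>> allowedWorkloadsByTarget,
                 LocalTime windowStart, LocalTime windowEnd, int maxRequestsPerWorkload) {
        this.allowedWorkloadsByTarget = allowedWorkloadsByTarget;
        this.windowStart = windowStart;
        this.windowEnd = windowEnd;
        this.maxRequestsPerWorkload = maxRequestsPerWorkload;
    }

    // Returns the denial reason, or empty when the request may proceed to the PAM engine.
    synchronized Optional<String> denialReason(IssueRequest req, LocalTime now) {
        Set<String> allowed = allowedWorkloadsByTarget.get(req.target);
        if (allowed == null || !allowed.contains(req.workload)) {
            return Optional.of("workload not allow-listed");
        }
        if (!req.workloadEnv.equals(req.targetEnv)) {
            return Optional.of("environment mismatch");
        }
        if (now.isBefore(windowStart) || !now.isBefore(windowEnd)) {
            return Optional.of("outside access window");
        }
        // Simplification: a lifetime counter; a real quota would reset per time window.
        int used = requestCounts.merge(req.workload, 1, Integer::sum);
        if (used > maxRequestsPerWorkload) {
            return Optional.of("rate limit exceeded");
        }
        return Optional.empty();
    }
}
```

Ordering the checks from cheapest and most decisive (allow-list) to stateful (quota) means denied requests never consume quota in this sketch; whether that is desirable is a policy decision for the broker owner.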

Decoupling Applications from PAM Vendors

Without an abstraction layer, each application team integrates the PAM SDK directly. This means:

- Every application must be updated when the PAM vendor releases a breaking SDK change.

- Secrets management logic is scattered across dozens of codebases in different languages.

- Audit and observability tooling must be built separately in every application.

With the custom broker layer, all of that complexity lives in one place. Applications are reduced to a simple HTTP call. The broker owns the PAM SDK, the retry logic, the audit logging, and the vendor-specific credential lifecycle — and it does so once, for everyone.

Treat the /issue broker the same way you treat a payment gateway: it is the single, hardened integration point. Applications should be consumers of credentials, never managers of them. The broker is where vendor relationships, organisational policies, and security hardening are concentrated.

The same principle of building an organisation-owned wrapper library applies to federated identity token exchange. In the Google Workload Identity Federation guide, we explore how wrapping the WLIF token exchange behind a custom library removes the direct dependency on Google's credential helper binary — giving your organisation the same benefits covered here: no vendor lock-in, centralised policy enforcement, and the freedom to swap the underlying mechanism without touching application code.

Why Traditional Static Accounts Fail

Why do traditional static accounts fail in modern cloud-native environments? Because Identity and Permissions must be decoupled from permanence.

Creating a random user is useless if that user has no permissions. Conversely, granting permanent permissions to a static user violates the principle of Least Privilege.

Enterprises solve this by utilizing dynamic secrets engines (like CyberArk Conjur or HashiCorp Vault). Instead of relying on static accounts, the architecture shifts to Zero Standing Privileges (ZSP). In this model:

- The account literally does not exist until the exact second it is needed.

- It is granted exact role-based permissions upon creation.

- It is wiped out of existence the moment its Time-To-Live (TTL) expires.

We need a pattern that allows an application to securely request access, receive a temporary credential, and seamlessly renew that credential without dropping active database connections.

Standing Privileges vs. Zero Standing Privileges

| Attribute | Static Service Account | JIT / ZSP Model |

|---|---|---|

| Credential Lifetime | Indefinite (months / years) | Minutes to hours (TTL-bound) |

| Rotation Requirement | Manual, disruptive | Automatic, seamless |

| Blast Radius on Compromise | High — unlimited access window | Minimal — expires within TTL |

| Ghost Account Risk | High | Near-zero (auto-deleted) |

| Compliance Auditability | Difficult (shared accounts) | Full traceability per workload |

How to Implement the Pattern

To implement this, we introduce an Identity Broker API (/issue) that sits between the consuming application and the enterprise PAM system / LDAP. The architecture is broken down into three phases.

Phase 1: Workload Identity — Authenticating to the Broker

Before the /issue API generates a database password, it must verify who is asking. The API should never rely on static API keys. Instead, it should leverage Workload Identity Federation (e.g., SPIFFE/SPIRE, Kubernetes Service Account OIDC tokens, or AWS IAM Roles).

The application presents a short-lived cryptographic token to the /issue API, proving its identity. This eliminates the chicken-and-egg problem of needing a static secret to get a dynamic secret.

The /issue API authenticates the workload identity (e.g., a Kubernetes service account or a cloud IAM role), not a human user. This makes the credential lifecycle fully automated and auditable.
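As a rough sketch of the checks the broker might apply to a Kubernetes service account token, the class below validates already-decoded claims. It assumes signature verification has been done beforehand by a JWT library; `WorkloadClaims` and `WorkloadIdentityVerifier` are hypothetical names, and the issuer and audience values are placeholders chosen by the caller.

```java
import java.time.Instant;
import java.util.Optional;

// Decoded token claims; signature verification is assumed to have happened already.
final class WorkloadClaims {
    final String issuer;
    final String audience;
    final String subject;
    final Instant expiresAt;

    WorkloadClaims(String issuer, String audience, String subject, Instant expiresAt) {
        this.issuer = issuer;
        this.audience = audience;
        this.subject = subject;
        this.expiresAt = expiresAt;
    }
}

final class WorkloadIdentityVerifier {
    private final String trustedIssuer;
    private final String expectedAudience;

    WorkloadIdentityVerifier(String trustedIssuer, String expectedAudience) {
        this.trustedIssuer = trustedIssuer;
        this.expectedAudience = expectedAudience;
    }

    // Returns "namespace/serviceAccount" when the claims are acceptable, empty otherwise.
    Optional<String> verify(WorkloadClaims claims, Instant now) {
        if (!trustedIssuer.equals(claims.issuer)) {
            return Optional.empty();
        }
        if (!expectedAudience.equals(claims.audience)) {
            return Optional.empty();
        }
        if (!now.isBefore(claims.expiresAt)) {
            return Optional.empty();
        }
        // Kubernetes service account subjects look like system:serviceaccount:<namespace>:<name>.
        String[] parts = claims.subject.split(":");
        boolean wellFormed = parts.length == 4
                && "system".equals(parts[0])
                && "serviceaccount".equals(parts[1]);
        return wellFormed ? Optional.of(parts[2] + "/" + parts[3]) : Optional.empty();
    }
}
```

The returned `namespace/serviceAccount` string is the workload identity the broker would then feed into its allow-list and audit log.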

Phase 2: The Dynamic Provisioning Architecture

Once authenticated, the /issue API orchestrates the creation of the credential using one of two methods:

Method A: The Database-Direct Method (Bypassing LDAP)

The secrets engine logs directly into the target database using a highly privileged "Role Manager" account. It executes a dynamic SQL statement to create an ephemeral user and immediately grants it a pre-existing role.

-- Step 1: Create the ephemeral user with a random password
-- NOTE: password value uses single quotes (string literal);
-- double quotes in PostgreSQL are identifier delimiters, not string delimiters
CREATE USER "v-ext-12345" WITH PASSWORD 'a8f#Kz!9pL';

-- Step 2: Grant a pre-existing, scoped role (never grant raw table permissions)
GRANT app_read_only TO "v-ext-12345";

-- Step 3: Database-enforced TTL as a hard safety net
-- (VALID UNTIL blocks new password logins after this time; it does not close open sessions)
ALTER USER "v-ext-12345" VALID UNTIL '2025-01-01 10:00:00';

-- On TTL expiry: the PAM system executes cleanup
REVOKE app_read_only FROM "v-ext-12345";
DROP USER "v-ext-12345";
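The statements above are normally generated rather than hand-written. The sketch below shows one way a broker might assemble them; `EphemeralUserSql` is a hypothetical helper, not part of any PAM product. Because the values are spliced into DDL text, the sketch rejects anything outside a strict character allow-list, and it draws random tokens from a quote-free alphabet.

```java
import java.security.SecureRandom;
import java.time.Instant;
import java.time.ZoneOffset;
import java.time.format.DateTimeFormatter;
import java.util.List;

final class EphemeralUserSql {
    private static final String ALPHABET =
            "ABCDEFGHJKLMNPQRSTUVWXYZabcdefghijkmnopqrstuvwxyz23456789";
    private static final SecureRandom RANDOM = new SecureRandom();
    private static final DateTimeFormatter TIMESTAMP =
            DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss").withZone(ZoneOffset.UTC);

    // Random token from a quote-free alphabet, usable as a password or user suffix.
    static String randomToken(int length) {
        StringBuilder sb = new StringBuilder(length);
        for (int i = 0; i < length; i++) {
            sb.append(ALPHABET.charAt(RANDOM.nextInt(ALPHABET.length())));
        }
        return sb.toString();
    }

    // Values are spliced into DDL text, so anything outside the allow-list is rejected.
    private static String requireSafe(String value) {
        if (!value.matches("[A-Za-z0-9_-]+")) {
            throw new IllegalArgumentException("unsafe SQL fragment: " + value);
        }
        return value;
    }

    // CREATE USER with a database-enforced expiry (UTC), then the scoped role grant.
    static List<String> createStatements(String user, String password, String role, Instant expiresAt) {
        String quotedUser = "\"" + requireSafe(user) + "\"";
        String quotedRole = "\"" + requireSafe(role) + "\"";
        return List.of(
                "CREATE USER " + quotedUser + " WITH PASSWORD '" + requireSafe(password)
                        + "' VALID UNTIL '" + TIMESTAMP.format(expiresAt) + "+00'",
                "GRANT " + quotedRole + " TO " + quotedUser);
    }

    // Cleanup executed at TTL expiry or by a reconciliation job.
    static List<String> cleanupStatements(String user, String role) {
        String quotedUser = "\"" + requireSafe(user) + "\"";
        String quotedRole = "\"" + requireSafe(role) + "\"";
        return List.of(
                "REVOKE " + quotedRole + " FROM " + quotedUser,
                "DROP USER " + quotedUser);
    }
}
```

A caller would pair `randomToken` with a prefix such as `v-ext-` for the user name and pass the lease expiry as `expiresAt`, so the database-side expiry and the broker-side TTL stay in step.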

Method B: The LDAP Group Mapping Method

If enterprise policy mandates that all identities live in Active Directory, the engine creates a temporary user in a specific Organizational Unit (OU) and adds that user to an AD Group (e.g., GRP_DB_Inventory_ReadWrite).

The database authenticates the user via LDAP and grants access based on group membership — the database itself never needs to know about the ephemeral user's creation or deletion.

- Provisioning: Create user in OU=Temporary_Access → Add to GRP_DB_Inventory_ReadWrite

- Revocation: Remove from the AD group → Delete the temporary OU entry

Phase 3: The Consuming Application Pattern (Handling the TTL)

If the /issue API grants a password with a 60-minute TTL, how does a long-running Spring Boot or Node.js application survive the rotation?

The key insight is that databases generally do not terminate active TCP connections when a password changes; they only reject new connection attempts that present the old password.

Pattern A: The Proactive "Lease Renewal" — The 80% Rule

This is the industry standard for long-running microservices.

- The application fetches the credential with a 60-minute TTL and initializes its database connection pool (e.g., HikariCP).

- A background thread is scheduled to wake up at 80% of the TTL (minute 48).

- At minute 48, the application calls the /issue API to fetch the next password.

- The application gracefully updates the connection pool configuration with the new password.

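HikariCP's maxLifetime must be set shorter than the TTL (e.g., 45 minutes). This forces the pool to retire old connections and establish new ones using the fresh password instead of holding connections indefinitely. One caveat: a connection opened just before the renewal at minute 48 still authenticates with the old password and, under a 45-minute maxLifetime, can stay in the pool past minute 60. If every connection must be off the old credential before it expires, keep maxLifetime within the gap between renewal and expiry (12 minutes in this example) or soft-evict the pool right after each renewal.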

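// Spring Boot + HikariCP: Proactive Lease Renewal (Pattern A)
// IssueApiClient and CredentialResponse are this guide's own types, not library classes.
import com.zaxxer.hikari.HikariDataSource;
import jakarta.annotation.PostConstruct; // javax.annotation.PostConstruct on Spring Boot 2.x
import java.time.Instant;
import java.util.concurrent.Executors;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.stereotype.Service;

@Service
public class JitCredentialService {
    private static final Logger log = LoggerFactory.getLogger(JitCredentialService.class);
    private static final long RENEWAL_DELAY_MINUTES = 48; // 80% of 60-min TTL

    private final HikariDataSource dataSource;
    private final IssueApiClient issueApiClient;
    private final ScheduledExecutorService scheduler = Executors.newSingleThreadScheduledExecutor();

    public JitCredentialService(HikariDataSource dataSource, IssueApiClient issueApiClient) {
        this.dataSource = dataSource;
        this.issueApiClient = issueApiClient;
    }

    @PostConstruct
    public void initializeAndScheduleRenewal() {
        applyCredentialToPool(issueApiClient.fetchCredential("inventory_db"));
        scheduler.scheduleAtFixedRate(this::renewCredential,
                RENEWAL_DELAY_MINUTES, RENEWAL_DELAY_MINUTES, TimeUnit.MINUTES);
    }

    private void renewCredential() {
        try {
            applyCredentialToPool(issueApiClient.fetchCredential("inventory_db"));
            log.info("JIT credential rotated successfully at {}", Instant.now());
        } catch (RuntimeException ex) {
            // An uncaught exception would silently cancel all future scheduled renewals
            log.error("JIT credential renewal failed", ex);
        }
    }

    private void applyCredentialToPool(CredentialResponse cred) {
        // maxLifetime set in application.properties:
        // spring.datasource.hikari.max-lifetime=2700000 (45 min in ms)
        // getUsername() is assumed here: each issuance may create a new ephemeral user
        dataSource.getHikariConfigMXBean().setUsername(cred.getUsername());
        dataSource.getHikariConfigMXBean().setPassword(cred.getPassword());
    }
}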
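The renewal point does not have to be hardcoded. As a small sketch of the 80% rule's arithmetic, the hypothetical `LeaseTiming` helper below derives the renewal delay and the remaining retry buffer from whatever TTL the broker returns, using integer percentages to avoid floating-point rounding.

```java
import java.time.Duration;

final class LeaseTiming {
    // Delay before renewal: the given percentage of the lease TTL (80 for the 80% rule).
    static Duration renewalDelay(Duration ttl, int percent) {
        if (ttl.isZero() || ttl.isNegative()) {
            throw new IllegalArgumentException("ttl must be positive");
        }
        if (percent <= 0 || percent >= 100) {
            throw new IllegalArgumentException("percent must be between 1 and 99");
        }
        return ttl.multipliedBy(percent).dividedBy(100);
    }

    // Time left between the renewal attempt and the expiry of the current credential.
    static Duration retryBuffer(Duration ttl, int percent) {
        return ttl.minus(renewalDelay(ttl, percent));
    }
}
```

For a 60-minute TTL, `renewalDelay(Duration.ofMinutes(60), 80)` is 48 minutes and `retryBuffer` is 12 minutes; for a 15-minute TTL the same call gives 12 and 3 minutes.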

Pattern B: The Sidecar / Agent Pattern

To avoid writing lease-renewal logic into every microservice, enterprises deploy a Sidecar container alongside the application pod. The Sidecar is solely responsible for authenticating with the /issue API and writing fresh credentials to an in-memory volume (e.g., /dev/shm/db-creds).

The application code remains completely unaware that the password is rotating.

# Kubernetes Pod spec: Sidecar credential injector (Pattern B)
spec:
  volumes:
    - name: db-creds
      emptyDir:
        medium: Memory # in-memory only, never written to disk
  initContainers:
    - name: credential-init
      image: corp/jit-broker-agent:latest
      command: ["sh", "-c", "jit-agent fetch --target inventory_db --out /creds/db.env"]
      volumeMounts:
        - mountPath: /creds
          name: db-creds
  containers:
    - name: app
      image: corp/inventory-service:latest
      volumeMounts:
        - mountPath: /creds
          name: db-creds
    - name: credential-sidecar
      image: corp/jit-broker-agent:latest
      command: ["sh", "-c", "jit-agent renew --interval 48m --out /creds/db.env"]
      volumeMounts:
        - mountPath: /creds
          name: db-creds
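On the application side, the only requirement is that credentials are read from the shared file whenever a new connection is needed, not cached at startup. A minimal sketch, assuming the agent writes simple KEY=VALUE lines (the `DB_USERNAME` and `DB_PASSWORD` keys and the `FileCredentialSource` class are hypothetical; the actual file format depends on the agent):

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.HashMap;
import java.util.Map;

final class FileCredentialSource {
    private final Path file;

    FileCredentialSource(Path file) {
        this.file = file;
    }

    // Re-reads the file on every call, so a rotation by the sidecar is picked up
    // the next time the application asks for credentials.
    Map<String, String> read() {
        Map<String, String> values = new HashMap<>();
        try {
            for (String line : Files.readAllLines(file, StandardCharsets.UTF_8)) {
                int eq = line.indexOf('=');
                if (line.isBlank() || line.startsWith("#") || eq <= 0) {
                    continue; // skip blanks, comments, and malformed lines
                }
                // Split on the first '=' only, so passwords may themselves contain '='.
                values.put(line.substring(0, eq).trim(), line.substring(eq + 1));
            }
        } catch (IOException e) {
            throw new UncheckedIOException("cannot read credential file " + file, e);
        }
        return values;
    }
}
```

With the pod spec above, the application would construct this with `Path.of("/creds/db.env")` and call `read()` from whatever hook its connection pool offers for supplying credentials.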

Pattern C: Dual Passwords (Database-Side)

For legacy applications that cannot gracefully rotate connection pools, some databases support Dual Passwords (MySQL 8.0.14+; Oracle offers a comparable gradual password rollover).

The PAM system issues a new password but retains the old one for an overlapping window (e.g., 10 minutes), preventing AuthenticationException errors during the race condition of a password swap.

-- MySQL 8+: Dual Password rotation (Pattern C)
-- Single-quoted strings work regardless of the ANSI_QUOTES SQL mode
-- Set new primary password, keep old as secondary for 10-min overlap
ALTER USER 'v-ext-12345'@'%' IDENTIFIED BY 'newSecurePassword!' RETAIN CURRENT PASSWORD;

-- After the overlap window, discard the old secondary password
ALTER USER 'v-ext-12345'@'%' DISCARD OLD PASSWORD;

Enterprise Best Practices

Building the /issue API is only half the battle. To make this system production-ready and compliant, the following best practices must be implemented.

1. Implement Reconciliation Loops (The Cleanup Failsafe)

The creation of a temporary account is a feature; the guaranteed deletion of that account is the security. If the PAM system or LDAP server experiences a network blip exactly when a TTL expires, the deletion command will fail, leaving an active "ghost account" behind.

Best Practice: Implement an out-of-band reconciliation cron job that runs periodically (e.g., every hour). This job scans the database or the OU=Temporary_Access LDAP OU and forcefully deletes any account whose age exceeds the maximum allowed TTL.

-- Reconciliation query: find and forcefully clean up ghost accounts
-- Scheduled as an independent cron job (every hour)
SELECT usename
FROM pg_catalog.pg_user
WHERE usename LIKE 'v-ext-%' -- single quotes: a string literal, not an identifier
  AND valuntil < NOW();

-- For each row returned, execute cleanup:
-- REVOKE app_read_only FROM "v-ext-XXXXX";
-- DROP USER "v-ext-XXXXX";
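The selection step of the reconciliation job can be sketched independently of the data source. The hypothetical `GhostAccountReconciler` below takes account names with their creation timestamps (however the job obtained them, from the database or from LDAP) and returns the ephemeral accounts that have outlived the maximum TTL; the `v-ext-` prefix follows the naming used in this guide.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Map;

final class GhostAccountReconciler {
    private static final String EPHEMERAL_PREFIX = "v-ext-";

    // Returns, in sorted order, ephemeral accounts older than the maximum allowed TTL.
    static List<String> findGhosts(Map<String, Instant> createdAtByAccount,
                                   Duration maxTtl, Instant now) {
        List<String> ghosts = new ArrayList<>();
        for (Map.Entry<String, Instant> entry : createdAtByAccount.entrySet()) {
            boolean ephemeral = entry.getKey().startsWith(EPHEMERAL_PREFIX);
            boolean overdue = entry.getValue().plus(maxTtl).isBefore(now);
            // Never touch accounts outside the ephemeral namespace, however old they are.
            if (ephemeral && overdue) {
                ghosts.add(entry.getKey());
            }
        }
        Collections.sort(ghosts);
        return ghosts;
    }
}
```

Restricting the sweep to the ephemeral prefix is the safety property here: a bug in the job can at worst delete a temporary account early, never a long-lived one.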

2. Audit Traceability (Mapping Ephemeral to Persistent)

When an auditor sees that user v-ext-12345 dropped a database table, that information is useless unless they know which application was using that ID.

Best Practice: The /issue API must maintain an immutable audit log mapping the ephemeral ID to the Workload Identity:

// Immutable audit log entry (append-only Firestore / SIEM)
{
  "timestamp": "2025-01-01T09:00:00Z",
  "workload": "payment-service-prod",
  "k8s_namespace": "production",
  "k8s_pod": "payment-service-7d8f9",
  "target_db": "inventory_db",
  "ephemeral_id": "v-ext-12345",
  "ttl_minutes": 60,
  "expires_at": "2025-01-01T10:00:00Z",
  "issued_by": "jit-broker/issue-api v2.3.1"
}
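The question an auditor asks of this log is "which workload held this ephemeral ID at that moment?". A minimal sketch of that lookup follows, with an in-memory list standing in for the append-only store; `AuditEntry` and `IssuanceAuditLog` are hypothetical names and carry only a subset of the fields shown above.

```java
import java.time.Instant;
import java.util.ArrayList;
import java.util.List;
import java.util.Optional;

final class AuditEntry {
    final Instant issuedAt;
    final Instant expiresAt;
    final String workload;
    final String targetDb;
    final String ephemeralId;

    AuditEntry(Instant issuedAt, Instant expiresAt, String workload,
               String targetDb, String ephemeralId) {
        this.issuedAt = issuedAt;
        this.expiresAt = expiresAt;
        this.workload = workload;
        this.targetDb = targetDb;
        this.ephemeralId = ephemeralId;
    }
}

// Append-only by construction: entries can be added and queried, never changed or removed.
final class IssuanceAuditLog {
    private final List<AuditEntry> entries = new ArrayList<>();

    synchronized void append(AuditEntry entry) {
        entries.add(entry);
    }

    // Resolves an ephemeral ID to the workload that held it at the given instant.
    synchronized Optional<String> workloadFor(String ephemeralId, Instant at) {
        for (AuditEntry entry : entries) {
            boolean active = !at.isBefore(entry.issuedAt) && at.isBefore(entry.expiresAt);
            if (entry.ephemeralId.equals(ephemeralId) && active) {
                return Optional.of(entry.workload);
            }
        }
        return Optional.empty();
    }
}
```

Matching on the time window as well as the ID matters if ephemeral IDs can ever be reused; if the broker guarantees globally unique IDs, the window check is a cheap extra safeguard.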

3. Scope the Broker's Privileges (Least Privilege)

The service account used by your /issue API to create temporary users is essentially a "God-Mode" account. If compromised, an attacker could create permanent backdoor accounts.

Best Practice: Use strict LDAP Delegation or Database Role scoping. The broker's service account must only have permissions to CREATE and DELETE users within a specific, isolated LDAP OU, and may only GRANT a predefined list of approved roles.

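-- PostgreSQL: Scope the broker role to ONLY what it needs
-- CREATEROLE is a role attribute, so it is set with ALTER ROLE rather than GRANT
ALTER ROLE jit_broker_role CREATEROLE;

-- Broker can only grant pre-approved application roles
-- (role membership is delegated WITH ADMIN OPTION; on PostgreSQL 16+ a CREATEROLE
-- role can grant only the roles it holds ADMIN OPTION on)
GRANT app_read_only TO jit_broker_role WITH ADMIN OPTION;
GRANT app_read_write TO jit_broker_role WITH ADMIN OPTION;

-- Explicitly deny elevated privileges
ALTER ROLE jit_broker_role NOSUPERUSER NOREPLICATION NOCREATEDB;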

4. Resiliency and Exponential Backoff

If the /issue API goes down, applications cannot boot, and running applications will eventually fail once their credentials expire and the pool can no longer open new connections.

Best Practice: Consuming applications must implement retry logic with exponential backoff when calling the /issue API. Because the app attempts to renew at the 80% mark, it has a 20% buffer window (e.g., 12 minutes) to keep retrying the API before the current credential expires.

// Spring Retry: Exponential backoff for /issue API calls
// (requires @EnableRetry on a Spring configuration class)
@Retryable(
    value = { IssueApiException.class },
    maxAttempts = 5,
    backoff = @Backoff(delay = 2000, multiplier = 2.0, maxDelay = 30000)
)
public CredentialResponse fetchCredential(String targetDb) {
    return issueApiClient.issue(targetDb);
}

// Invoked once all retry attempts are exhausted
@Recover
public CredentialResponse onIssueApiFailure(IssueApiException ex, String targetDb) {
    log.error("CRITICAL: /issue API unreachable after max retries for {}", targetDb, ex);
    alertingService.triggerP1Alert("jit-broker-unreachable", targetDb);
    throw new CredentialUnavailableException("Cannot obtain JIT credential", ex);
}
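It is worth checking how much of the 12-minute buffer a single retry cycle actually uses. The sketch below computes the waits between attempts for the settings above (delay 2000 ms, multiplier 2.0, maxDelay 30000 ms, 5 attempts), assuming the plain progression of delay times multiplier to the n-th power, capped at maxDelay. `BackoffSchedule` is a hypothetical helper, not part of Spring Retry.

```java
import java.util.ArrayList;
import java.util.List;

final class BackoffSchedule {
    // Waits between attempts: one fewer than maxAttempts, each capped at maxDelayMillis.
    static List<Long> delaysMillis(int maxAttempts, long initialDelayMillis,
                                   double multiplier, long maxDelayMillis) {
        List<Long> delays = new ArrayList<>();
        double next = initialDelayMillis;
        for (int attempt = 1; attempt < maxAttempts; attempt++) {
            delays.add(Math.min((long) next, maxDelayMillis));
            next *= multiplier;
        }
        return delays;
    }

    // Total time spent waiting across the whole retry cycle.
    static long totalMillis(List<Long> delays) {
        long total = 0;
        for (long delay : delays) {
            total += delay;
        }
        return total;
    }
}
```

Under that assumption the waits are 2, 4, 8, and 16 seconds, about 30 seconds in total. A single retry cycle therefore covers only a small part of the 12-minute buffer, so the renewal scheduler would need to re-run the cycle (or use more attempts) to make full use of it.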

Conclusion

Transitioning to Just-In-Time access is not merely a password rotation strategy; it is a fundamental shift in enterprise architecture. By forcing applications to request ephemeral access via an /issue broker, organizations achieve true Zero Standing Privileges.

While the creation of a temporary account is straightforward, the architectural complexity lies in:

- The guaranteed revocation of that access (reconciliation loops).

- The graceful handling of connection pools during rotation (the 80% renewal rule).

- The robust auditing required for compliance (immutable ephemeral-to-workload mapping).

Whether utilizing enterprise tools like CyberArk Conjur or building a custom LDAP-brokered API, designing for the cleanup and rotation lifecycle is what separates a fragile script from a resilient, production-ready enterprise security pattern.

🔐 Eliminate Static Credentials Entirely

Combine this JIT pattern with Workload Identity Federation to remove long-lived Google Service Account keys from your stack entirely. Check out our guide on Google Workload Identity Federation to complete your Zero Trust journey.

Implementation FAQ & Common Pitfalls

Frequently Asked Questions

What happens if the /issue API is unavailable at startup?

The application should fail fast and not start. A retry with exponential backoff (up to a reasonable timeout, e.g., 2 minutes) is acceptable. Do not fall back to a cached static credential — that defeats the purpose of JIT.

Can I use this pattern with non-database targets (e.g., S3, Kafka)?

Yes. The same pattern applies: the /issue API generates a short-lived IAM role assumption token (for S3) or a temporary Kafka ACL user. The TTL and renewal logic remain identical.

What is the difference between CyberArk Conjur and HashiCorp Vault?

Both are mature enterprise PAM solutions. CyberArk Conjur is typically chosen by organizations with existing CyberArk PAM investments and strong LDAP/AD integration requirements. HashiCorp Vault is preferred in cloud-native or multi-cloud environments due to its extensive secrets engine ecosystem (AWS, GCP, Azure, databases) and its open-source community.

How short should the TTL be?

Industry practice: 1 hour for most application-to-database credentials. Mission-critical systems with strong renewal automation may use 15 to 30 minutes. The TTL must always exceed your connection pool's maxLifetime.

Does the database terminate active connections when the password changes?

No. This is the key property that makes this architecture viable. In PostgreSQL, MySQL, and Oracle, existing authenticated sessions remain open after a password change. Only new connection attempts must use the new password. The pool's maxLifetime setting drives the graceful cycling of old connections.